The gap nobody talks about: what happens after the fix?

Everything above handles the initial validation. Detect the problem, investigate, and fix the source data. But here's where the workflow breaks down.

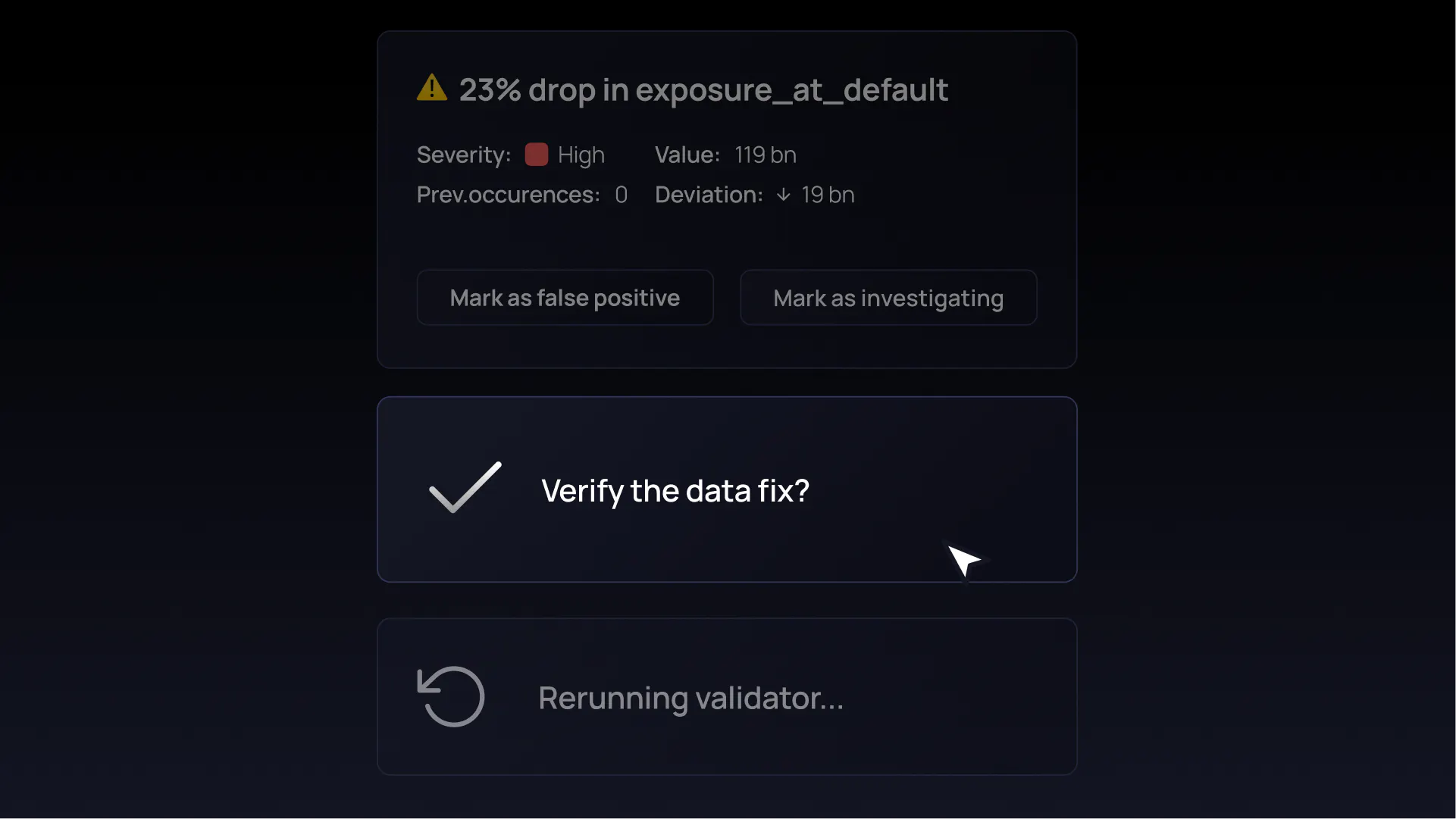

You've fixed the data. Now you need to verify the fix. What are your options?

Wait for the next check. If your validators use global windows (a full table scan each cycle), the next poll will eventually pick up the corrected data. But the result is just another data point on the chart. There's no connection between the original failure and the new value. No audit trail. No proof that a specific incident was detected and then resolved.

For tumbling windows (the kind used for daily, hourly, or weekly batches), waiting doesn't help at all. Once a window closes, it's historical. The next poll moves forward to the next time period. Monday's window had a problem? Tuesday's poll validates Tuesday's data. Monday is never re-examined.

Reset the validator. Delete all existing metrics, incidents, and history. Re-process everything from scratch. You'll get a fresh result, but you've destroyed the evidence of the original failure. For compliance purposes, this is arguably worse than not checking at all. You had a record of the problem, and you erased it.

Neither option closes the loop. Neither gives you proof that a specific problem was found, remediated, and verified.

In regulated industries, this isn't a workflow inconvenience. It's a compliance failure. Banking regulations like BCBS 239 and frameworks like SOX require evidence of the full cycle: detection, remediation, and re-verification. An auditor asking "how do you know this was fixed?" needs a better answer than "we waited for the next daily run" or "we reset everything and it looks fine now."

This is an industry-wide blind spot. Most data quality tools treat execution as a one-way street: validate, detect, alert. The remediation verification step is left entirely to manual processes: spreadsheets, screenshots, Slack messages that say "looks good now."