The evolution of data reliability

The industry has moved, and is still moving, through three distinct phases of data reliability. Understanding where your organization currently stands is the first step toward closing the trust gap and unlocking data value.

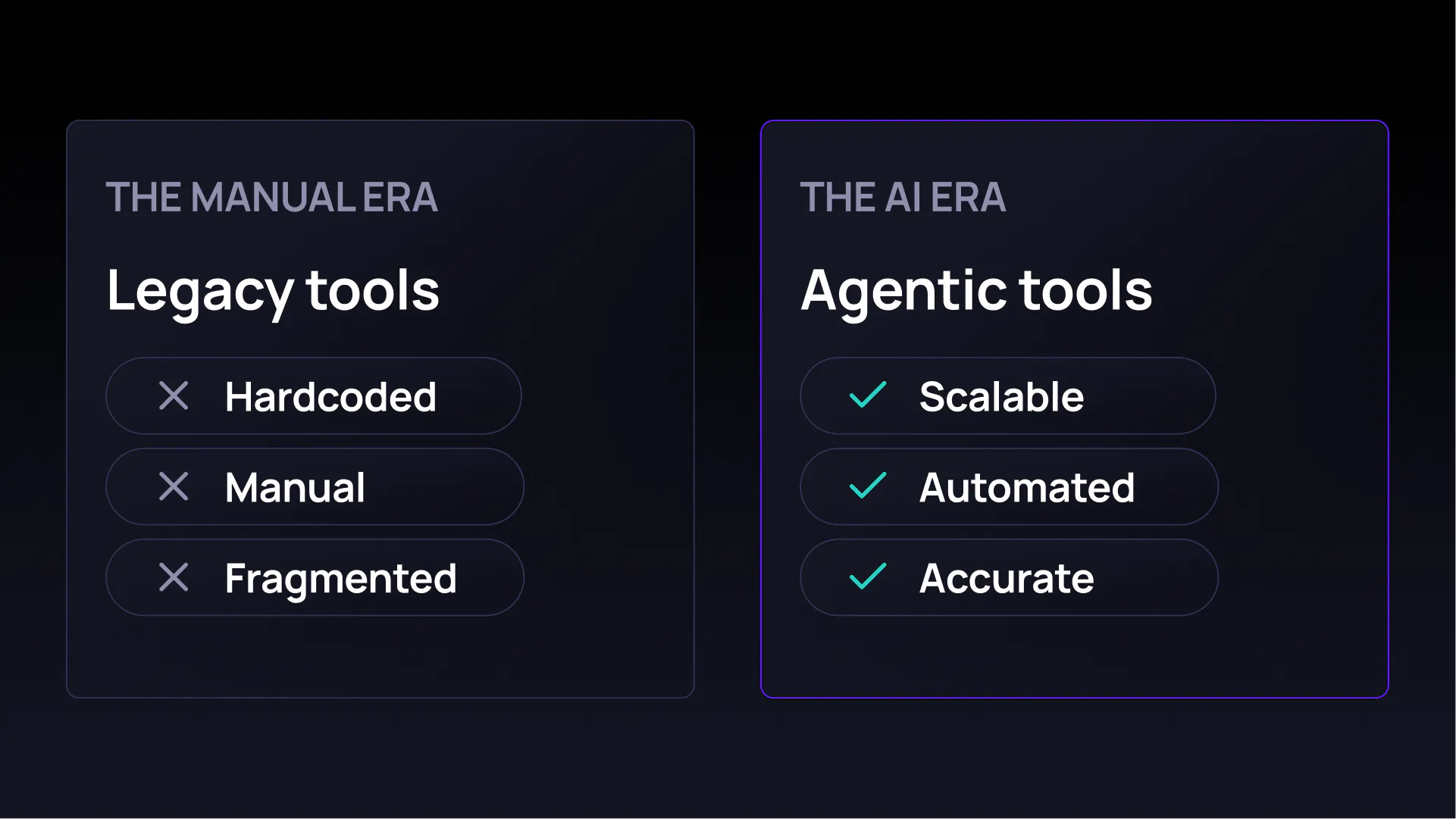

01 The manual era: legacy data quality

Legacy data tools were built for a world of static, on-premise databases. They rely on rules that humans define and configure by hand. While useful as a first step toward understanding the data landscape, these tools are limited in scalability, maintainability, and the ability to fully capture data inconsistencies. For example, you cannot write a rule for an “unknown unknown”. If a schema changes or a distribution shifts in a way you didn’t predict, most static tools stay silent. This creates a massive tax on data engineers, who end up spending most of their time manually maintaining rules and trying to catch data failures before they impact the business.
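To make the “unknown unknown” problem concrete, here is a minimal sketch in Python. The rule, the thresholds, and the order amounts are all hypothetical and do not come from any particular tool; the point is only that a hand-written range rule can pass while the distribution underneath it has shifted dramatically.

```python
from statistics import mean


def static_rule_passes(values, low=0.0, high=1000.0):
    """Hand-written rule (hypothetical): every value is non-null and within [low, high]."""
    return all(v is not None and low <= v <= high for v in values)


def mean_shift_ratio(baseline, current):
    """Relative change of the current mean compared with the baseline mean."""
    base = mean(baseline)
    return abs(mean(current) - base) / abs(base)


# Hypothetical order amounts: last week's batch versus today's batch,
# where an upstream change silently rescaled every value by a factor of ten.
baseline = [100.0, 120.0, 95.0, 110.0, 105.0]
current = [10.0, 12.0, 9.5, 11.0, 10.5]

rule_ok = static_rule_passes(current)          # the static rule sees nothing wrong
shift = mean_shift_ratio(baseline, current)    # a simple distribution check does

print(f"static rule passes: {rule_ok}, mean shift: {shift:.0%}")
```

Every value in the new batch is still inside the allowed range, so the rule stays silent even though the mean has dropped by roughly 90%. Someone would have had to anticipate this exact failure and write a rule for it in advance.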

02 The metadata era: data observability

Tools in this category moved the needle by focusing on metadata: information about the data. They aim to cover the health of data pipelines by tracking whether data has arrived on time (freshness) and whether tables are the right size (volume). While useful for pipeline health, metadata observability is shallow and mostly serves data engineers trying to understand whether pipelines are functioning. It does not look at the actual data. An AI model can be fed a perfectly timed table full of incorrect values, and an executive dashboard can refresh on schedule yet show incorrect information.
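The gap described above can be sketched in a few lines of Python. The function names, thresholds, timestamps, and table contents below are illustrative assumptions, not the behavior of any specific observability product: the freshness and volume checks both pass, while a simple look at the values shows that most of the `revenue` column is missing.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(last_loaded_at, now, max_age=timedelta(hours=1)):
    """Metadata check (hypothetical): the table was loaded recently enough."""
    return now - last_loaded_at <= max_age


def volume_ok(row_count, expected_rows, tolerance=0.1):
    """Metadata check (hypothetical): row count is within a tolerance of the expected size."""
    return abs(row_count - expected_rows) <= tolerance * expected_rows


def null_fraction(rows, column):
    """Data-level check: share of rows where the given column is missing."""
    return sum(1 for row in rows if row[column] is None) / len(rows)


# Hypothetical load: on time and the right size, but 90% of revenue values are missing.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
last_loaded_at = now - timedelta(minutes=5)
rows = [{"order_id": i, "revenue": None if i % 10 else 100.0} for i in range(100)]

metadata_ok = is_fresh(last_loaded_at, now) and volume_ok(len(rows), expected_rows=100)
missing_revenue = null_fraction(rows, "revenue")

print(f"metadata checks pass: {metadata_ok}, missing revenue: {missing_revenue:.0%}")
```

From the pipeline's point of view this load is healthy. Only a check that reads the values themselves reveals that the table is unusable for a model or a dashboard.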

03 The AI era: agentic data quality

Tools like Validio represent the leap into the agentic data quality era. This phase combines broad metadata observability with deep profiling and monitoring of actual business data, enabling autonomous action and efficient scaling. Validio not only alerts organizations to issues in their data, but also automates root-cause analysis, integrates lineage, and prevents issues from recurring.