Two sides of the same coin — the nuances of real-time systems and streaming data

One of the major trends we see in the data space is the rise of real-time data and streaming data infrastructure. Not only has it garnered a lot of attention within the data community, but the discussion around the real-time paradigm has also reached mainstream media, for example with The Economist’s piece on ‘the real-time revolution’ and its impact on macroeconomics. While many industry experts remain bullish on the development, for example by including real-time and streaming infrastructure among the top Data Engineering trends or by discussing the emergence of real-time ML in depth, there are still ongoing discussions in the data community regarding the cost-benefit of real-time data today. A few nuggets from a recent LinkedIn thread discussing the concrete value include:

“Everyone wants real-time, only very few know why, and almost no one wants to pay for it ;)”

“They are essential for demos — if it’s not blinking, it’s not working”

At Validio we support both data at rest (e.g. batch data pipelines and data stored in warehouses or object stores) and data in motion (e.g. streaming pipelines and real-time use cases). The majority of our customers still work with batch data pipelines, but we see an increasing appetite for real-time and near real-time use cases, along with growing adoption of streaming technologies such as Kafka, Kinesis and Pub/Sub. Confluent’s recent successful IPO and the rise of startups in the space (or startups using language that includes ‘real-time’) such as ClickHouse, Rockset and Materialize are all signals that the real-time and streaming space is growing.

Despite increased interest in real-time applications, what we hear from our customers and the community as we enter 2022 is that setting up and managing stream processing stacks remains cumbersome and increases complexity by an order of magnitude (or even more) compared with managing batch processing.

While we are strong believers in the secular trend of increased development and deployment of streaming data infrastructure and the growth of concrete real-time use cases in the long term, we’ll defer the detailed discussion of why we are bullish to another article. Instead, let’s look at the definition and meaning of ‘real-time’ and ‘streaming data’.

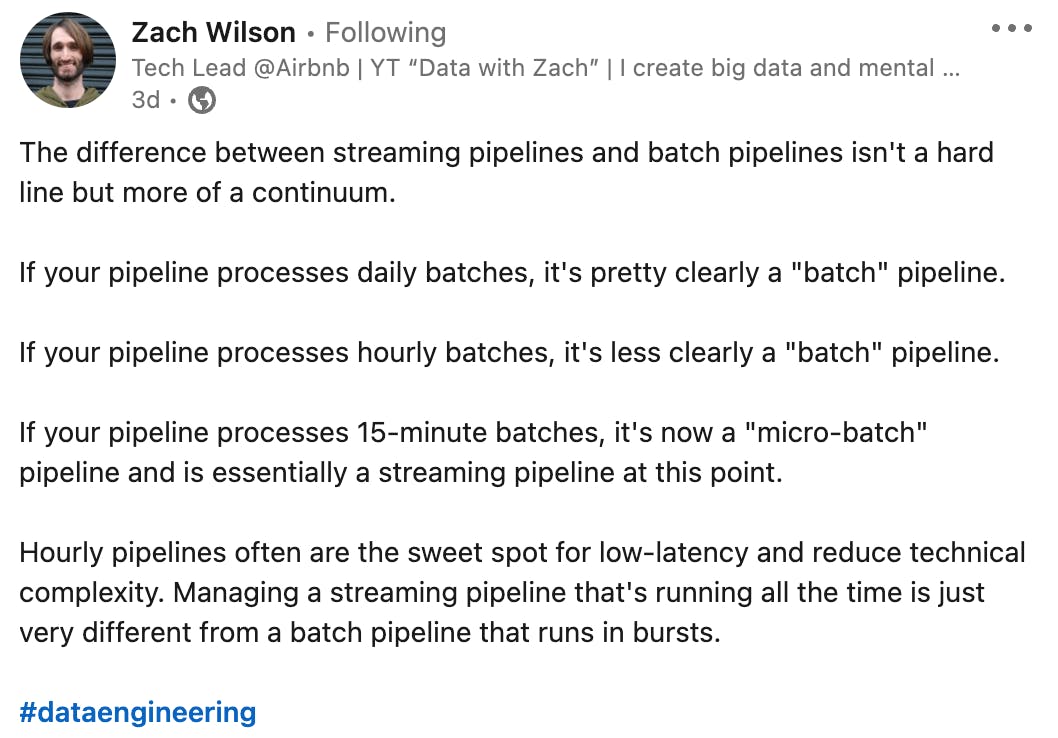

What we’ve noticed is that the terms ‘real-time’ and ‘streaming’ are often used interchangeably, whether in Medium articles, LinkedIn posts, Hacker News/Reddit threads or discussions with our customers. In this piece, we’ll explore how the two terms differ and whether the distinction is worth making.

Separating real-time systems and streaming data

Let’s go back to the basics and start by looking at the definition of the two terms:

Real-time

Everyone has an intuitive notion of what ‘real-time’ means, but when it comes to defining the term, definitions tend to differ ever so slightly. So let’s find a common point of departure. Wikipedia defines real-time computing as:

“Real-time programs must guarantee response within specified time constraints, often referred to as “deadlines” […] Real-time processing fails if not completed within a specified deadline relative to an event; deadlines must always be met, regardless of system load.”

When we talk about real-time, we typically distinguish between two degrees:

- Real-time: usually in the range of sub-milliseconds to seconds

- Near real-time: usually in the range of sub-seconds to hours

In other words, the term ‘real-time’ is closely linked to the notion of time, as the name suggests, and to how quickly something needs to be completed.
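To make the notion of a deadline concrete, here is a minimal Python sketch that times a handler against a time budget and reports whether the deadline was met. The names `run_with_deadline` and `DeadlineResult` are hypothetical and chosen for illustration only. Note that this merely measures after the fact; a true real-time system has to guarantee the deadline up front, regardless of system load.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class DeadlineResult:
    """Outcome of processing one event against a time budget (hypothetical type)."""
    elapsed_s: float
    deadline_s: float

    @property
    def met(self) -> bool:
        # The deadline is met only if processing finished within the budget.
        return self.elapsed_s <= self.deadline_s


def run_with_deadline(
    handler: Callable[[Any], None],
    event: Any,
    deadline_s: float,
    clock: Callable[[], float] = time.monotonic,
) -> DeadlineResult:
    """Process `event` and report whether it finished within `deadline_s` seconds."""
    start = clock()  # a monotonic clock is not affected by wall-clock adjustments
    handler(event)
    return DeadlineResult(elapsed_s=clock() - start, deadline_s=deadline_s)


# Example: a trivial handler checked against a 5-second budget.
result = run_with_deadline(lambda event: None, {"id": 1}, deadline_s=5.0)
```

Under this sketch, the two degrees listed above differ only in the size of `deadline_s`: a real-time use case might set a budget of a few milliseconds, while a near real-time one could tolerate minutes or hours.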